
The printed output of `authHash.digest()` looks valid to me. I assume I am getting something wrong in my call to `decode()`, but I cannot figure out what it is.

The error I get is `UnicodeDecodeError: 'utf8' codec can't decode byte 0x94 in position 0: invalid start byte`. It happens at the step where I try to look at the string representation of the binary digest.
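A minimal sketch of why that `decode()` call fails, assuming the code hashes with `hashlib.md5` and then calls `.decode("utf-8")` on `digest()`. The fixed input `"abc"` is an illustrative choice (it is a published MD5 test vector) so the result is reproducible. `digest()` returns arbitrary binary bytes, which are usually not valid UTF-8; `hexdigest()` or Base64 give a printable form instead.

```python
import base64
import hashlib

# Fixed input so the result is reproducible; "abc" is an RFC 1321 test vector.
auth_hash = hashlib.md5("abc".encode("utf-8"))

raw = auth_hash.digest()  # 16 raw bytes of binary data, not encoded text

decode_failed = False
try:
    raw.decode("utf-8")
except UnicodeDecodeError as exc:
    # The first byte here is 0x90, a UTF-8 continuation byte, so it cannot
    # start a character ("invalid start byte").
    decode_failed = True
    print("decode failed:", exc)

# Printable representations of the same 16 bytes:
hex_text = auth_hash.hexdigest()                  # 32 hexadecimal characters
b64_text = base64.b64encode(raw).decode("ascii")  # 24 Base64 characters
print(hex_text)  # → 900150983cd24fb0d6963f7d28e17f72
print(b64_text)
```

When the hashed input includes a timestamp, the digest bytes differ on every run, so the byte named in the error changes from run to run even though the cause stays the same.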

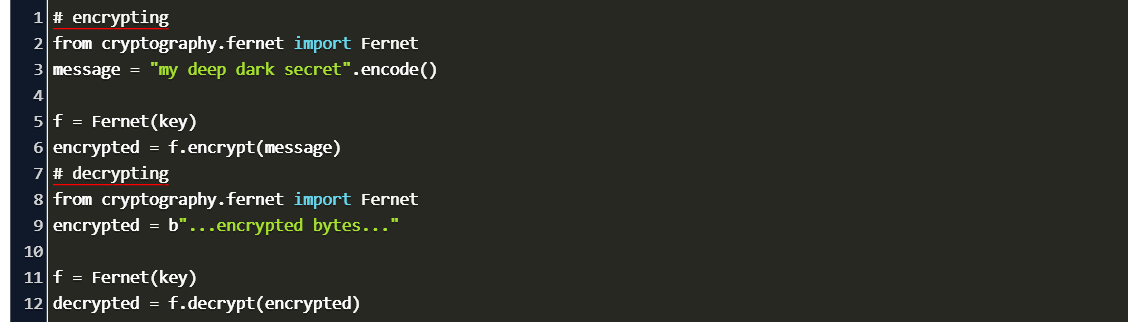

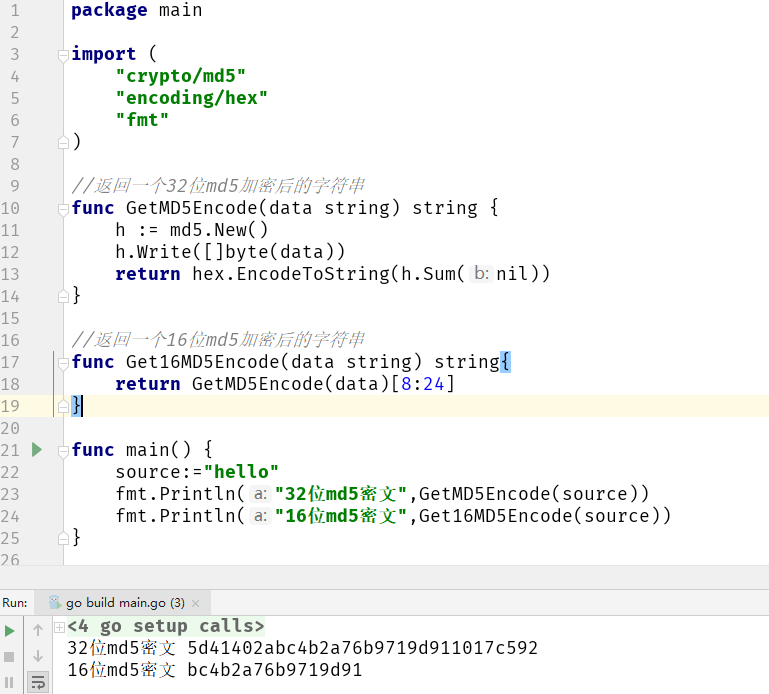

The `hashlib` functions we will be exploring are MD5 and SHA-1. The MD5 algorithm produces a 128-bit hash value. In my code I build the input with `Temp = str.encode(sandboxAPIKey + sandboxSharedSecret + repr(int(time.time())))`; the string is encoded first because `update()` on an `md5()` object needs a binary (bytes) representation of the string, not a `str`.

Unlike the encoding modules discussed earlier, a hash cannot simply be decoded: hashing is a one-way operation, and recovering the input from a digest is meant to be infeasible. A digest is therefore not an encoding of the original text, and its raw bytes are in general not valid UTF-8. Note that MD5 and SHA-1 are no longer considered collision-resistant, so they should not be relied on where strong security is required.

`hmac.digest(key, msg, digest)` returns the digest of `msg` for the given secret `key` and `digest`. The parameters have the same meaning as in `hmac.new()`, and the function is equivalent to `HMAC(key, msg, digest).digest()`, but uses an optimized C or inline implementation, which is faster for messages that fit into memory.

When using Linux or macOS, use `export` to set the encoding environment variable. We set this outside of Python to ensure that a particular encoding is used everywhere.
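A sketch of building and hashing the message the way the `Temp` line does, with hypothetical placeholder credentials (`sandbox_api_key` and `sandbox_shared_secret` are stand-ins, not the question's real values). It shows the encode-before-`update()` step and the digest sizes of MD5 and SHA-1.

```python
import hashlib
import time

# Hypothetical placeholder credentials (not real keys).
sandbox_api_key = "example-api-key"
sandbox_shared_secret = "example-shared-secret"

# Same shape as the Temp line: concatenate the strings with a Unix
# timestamp, then encode, because update() needs bytes, not str.
temp = str.encode(sandbox_api_key + sandbox_shared_secret + repr(int(time.time())))

md5_hash = hashlib.md5()
md5_hash.update(temp)          # feed the bytes into the MD5 object
sha1_hash = hashlib.sha1(temp) # the constructor can take the data directly

# MD5 yields 128 bits (16 bytes); SHA-1 yields 160 bits (20 bytes).
# hexdigest() is already a str, so no .decode() is needed.
print(md5_hash.digest_size * 8, md5_hash.hexdigest())
print(sha1_hash.digest_size * 8, sha1_hash.hexdigest())
```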

Python will generally use the OS's default encoding for files and the terminal, and the details are unique to each OS. We can make a general setting for the standard input, output, and error streams using the `PYTHONIOENCODING` environment variable.

Sometimes the error is instead `UnicodeDecodeError: 'utf8' codec can't decode byte 0xd3 in position 0: invalid continuation byte`, and the error changes depending on when I run the code. I am running this code on Ubuntu 10.10 in Python 3.1.1. The keys in the code are placeholders (not the real keys or secret), for example `sandboxAPIKey = "wed23hf5yxkbmvr9jsw323lkv5g"`.

The MD5 hash function accepts a sequence of bytes of any length and returns a 128-bit hash value, a fingerprint of the input. Hashing the same input with MD5 will always result in the same 128-bit output. You can use the `hmac` module in Python to key-hash a message.
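A minimal sketch of key-hashing a message with the `hmac` module, assuming a hypothetical key and message. SHA-256 is used as the underlying hash here; passing `hashlib.md5` instead would work the same way. Note that the one-shot `hmac.digest()` needs Python 3.7 or later, so on Python 3.1.1 only the `hmac.new()` form is available.

```python
import hashlib
import hmac

# Hypothetical key and message for illustration only.
secret_key = b"example-shared-secret"
message = b"example-api-key1300000000"

# Object-based API: available in every Python 3 release.
tag_object = hmac.new(secret_key, message, hashlib.sha256).hexdigest()

# One-shot API (Python 3.7+); returns raw bytes, converted to hex here.
tag_one_shot = hmac.digest(secret_key, message, "sha256").hex()

# Deterministic: the same key and message always give the same tag.
repeat = hmac.new(secret_key, message, hashlib.sha256).hexdigest()

# Compare tags in constant time rather than with ==.
matches = hmac.compare_digest(tag_object, tag_one_shot)
print(tag_object, matches)
```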
